The digital asset landscape of 2026 is defined not only by price action but by mathematical accountability. For years, the “Black Box” model dominated high-complexity crypto ecosystems, particularly in the iGaming and decentralized finance (DeFi) sectors, where end-users had to navigate opaque terms, predatory rollover hurdles, and hidden redemption clauses.

However, as Bitcoin matures into a global reserve asset, the demand for institutional-grade transparency has given rise to a new industry standard: the Transparency Meta. By combining Explainable AI (XAI) with blockchain-native data, new frameworks are finally letting retail participants see exactly what lies under the hood of promotional liquidity.

The Shift from “Trust Me” to “Audit Me”

Historically, the iGaming sector operated on a “trust-based” model. Platforms would offer massive incentive structures to attract users, only to bury insurmountable redemption requirements in the fine print. These “liquidity traps” often made it statistically impossible for the user to ever realize a return, effectively acting as a slow bleed of capital.

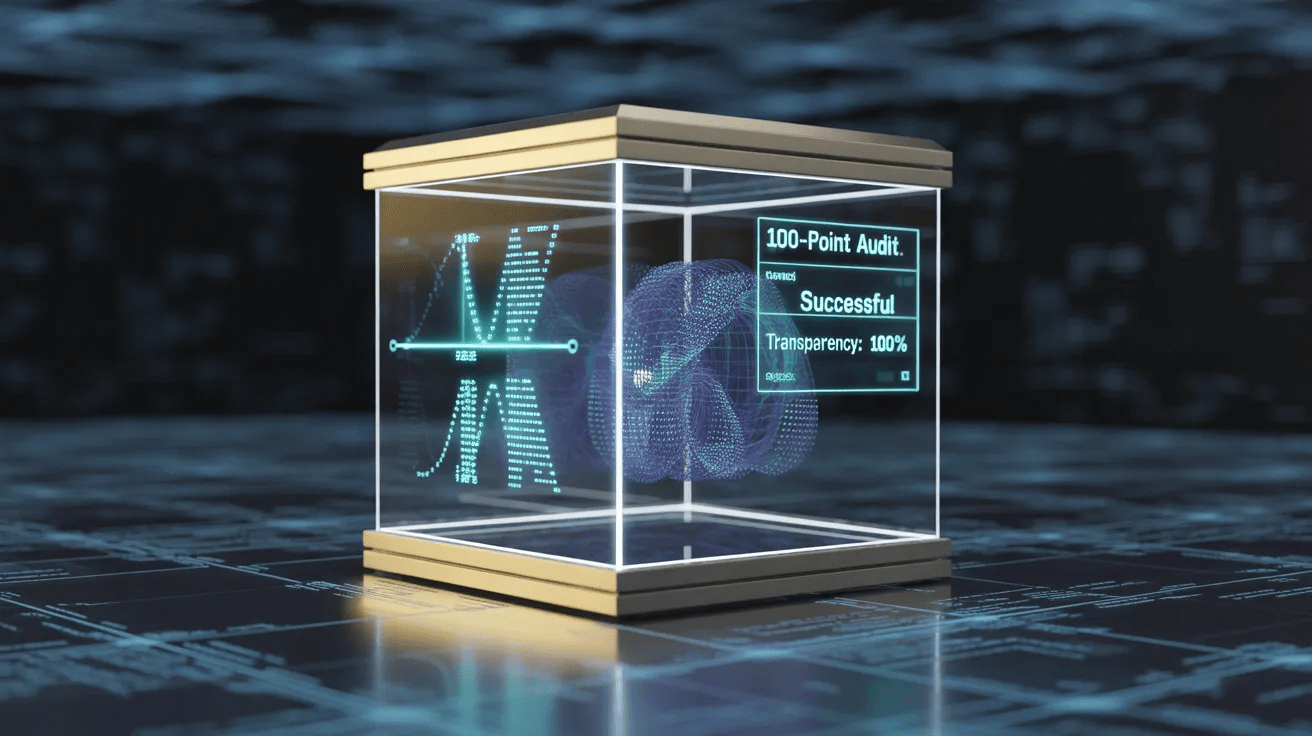

In 2026, the narrative has shifted toward Explainable AI (XAI). Unlike traditional black-box algorithms, XAI doesn’t just provide a result; it provides a justification. In the context of platform incentives, AI models now scan thousands of lines of “Terms and Conditions” in real time to calculate the “True Value” of an offer. This is moving the industry toward “Glass Box” platforms, where every promotional promise is backed by verifiable data.
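A common rule of thumb for valuing a bonus is that the expected cost of clearing a rollover is roughly the required turnover multiplied by the house edge. The sketch below applies it; the `Offer` fields and the `true_value` function are hypothetical names, and the model assumes the rollover applies to the bonus amount only, at a single average house edge, ignoring variance, bet caps, and expiry.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Offer:
    """Hypothetical machine-readable summary of a promotional offer."""
    bonus: float       # bonus credited, in units of the deposit asset (e.g. BTC)
    rollover: float    # wagering multiplier applied to the bonus amount
    house_edge: float  # average house edge of eligible games, e.g. 0.02 for 2%


def true_value(offer: Offer) -> float:
    """Expected value of the bonus after clearing its rollover requirement.

    Assumes every wager is placed at the stated average house edge, so the
    expected loss over the required turnover is turnover * house_edge.
    Variance, bet caps, and expiry windows are ignored.
    """
    turnover = offer.rollover * offer.bonus
    expected_loss = turnover * offer.house_edge
    return offer.bonus - expected_loss


generous = Offer(bonus=0.01, rollover=10, house_edge=0.02)
trap = Offer(bonus=0.01, rollover=60, house_edge=0.02)
print(f"{true_value(generous):.4f}")  # 0.0080
print(f"{true_value(trap):.4f}")      # -0.0020
```

Under these assumptions an offer's expected value turns negative once rollover × house edge exceeds 1, which is why the 60x offer (60 × 0.02 = 1.2) is a capital sink even though its headline bonus matches the 10x offer.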

The Mechanics of Algorithmic Deception and the AI Response

To understand why the Transparency Meta is necessary, one must look at the evolution of “Algorithmic Deception.” In the early 2020s, platforms used “Sticky Liquidity” models in which user capital was mathematically tethered to the platform through layers of obfuscated code. This wasn’t just a matter of hidden text; it was a matter of game theory. Platforms built incentive structures around a “Negative Expected Value” ($-EV$) loop, engineered specifically to exploit the psychological momentum of the end-user. By the time participants realized the redemption hurdles were insurmountable, their capital was already “locked” within the protocol’s ecosystem.

The AI-driven response in 2026 uses Natural Language Processing (NLP) and heuristic analysis to deconstruct these loops. Modern auditing tools don’t just read the terms; they simulate thousands of “user journeys” to estimate the probability that a participant successfully meets the protocol’s requirements. This transition from static reading to dynamic simulation represents a paradigm shift in consumer protection. It forces platform architects to design “Symmetry of Information” models, in which the house edge is transparently disclosed and mathematically verified before a single satoshi is deposited. As these simulations become more accessible to the average investor, the market is effectively “pricing out” platforms that rely on deception, driving a flight to quality across iGaming and the broader digital asset space.
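The “user journey” simulation can be illustrated with a small Monte Carlo sketch. It assumes a deliberately simple model: fixed one-unit stakes on an even-money game with a 49% win probability (a 2% house edge) and a starting balance equal to the bonus. It then estimates how often a simulated user meets the turnover requirement without running out of funds. The function names and parameters are hypothetical and not taken from any real auditing tool.

```python
import random


def simulate_journey(rng: random.Random, balance: int, stake: int,
                     required_turnover: int, win_prob: float) -> bool:
    """Play fixed-stake even-money bets until the rollover is met or funds run out.

    Returns True if the simulated user clears the turnover requirement
    with a positive balance left to redeem.
    """
    turnover = 0
    while turnover < required_turnover:
        if balance < stake:
            return False              # busted before clearing the rollover
        turnover += stake
        if rng.random() < win_prob:
            balance += stake          # even-money win
        else:
            balance -= stake          # loss
    return balance > 0


def clearance_probability(n_journeys: int, balance: int, stake: int,
                          required_turnover: int, win_prob: float,
                          seed: int = 0) -> float:
    """Fraction of simulated journeys that clear the requirement."""
    rng = random.Random(seed)         # fixed seed keeps the audit reproducible
    cleared = sum(
        simulate_journey(rng, balance, stake, required_turnover, win_prob)
        for _ in range(n_journeys)
    )
    return cleared / n_journeys


# 50-unit bonus, 1-unit stakes, 2% house edge (win probability 0.49).
p10 = clearance_probability(500, balance=50, stake=1,
                            required_turnover=10 * 50, win_prob=0.49)
p40 = clearance_probability(500, balance=50, stake=1,
                            required_turnover=40 * 50, win_prob=0.49)
print(f"10x rollover: {p10:.2f}, 40x rollover: {p40:.2f}")
```

Comparing `p10` and `p40` shows how sharply the clearance probability falls as the rollover rises, even though the bonus and house edge are unchanged.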

Quantifying Protocol Risk

The most significant breakthrough in this meta is the emergence of standardized risk scoring. As data-driven oversight becomes the industry norm, specialized frameworks have appeared to quantify protocol risk. The AI-grading methodology used by FreeCryptoBonus is a prominent example of this shift: it uses a 100-point auditing scale to break down the mathematical viability of promotional liquidity for retail participants.

By assigning weighted values to variables such as rollover multipliers (30%), expiry windows (15%), and hidden restrictions (20%), these AI-driven audits strip away the marketing. They provide a “vulnerability score” that helps users distinguish between a genuine growth incentive and a predatory capital sink.
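A weighted 100-point score of this kind reduces to a weighted sum of per-factor subscores. The sketch below uses the three weights named above. The text does not say how the remaining 35% is allocated, so it is lumped into a hypothetical `other` bucket; how each 0-to-1 subscore is derived is likewise an assumption left outside the sketch.

```python
import math

# Weights for the three factors named in the text. The remaining 35% is
# not specified there, so it is lumped into a hypothetical "other" bucket.
WEIGHTS = {
    "rollover": 0.30,
    "expiry": 0.15,
    "hidden_restrictions": 0.20,
    "other": 0.35,
}
assert math.isclose(sum(WEIGHTS.values()), 1.0)


def weighted_score(subscores: dict[str, float]) -> float:
    """Combine per-factor subscores (0.0 = worst, 1.0 = best) into a 0-100 score."""
    if set(subscores) != set(WEIGHTS):
        raise ValueError(f"expected exactly these factors: {sorted(WEIGHTS)}")
    for name, value in subscores.items():
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} subscore must be in [0, 1], got {value}")
    return 100 * sum(WEIGHTS[name] * subscores[name] for name in WEIGHTS)


offer = {"rollover": 0.5, "expiry": 1.0, "hidden_restrictions": 0.2, "other": 0.8}
print(round(weighted_score(offer), 1))  # 62.0
```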

The move toward a standardized 100-point scale is not merely for ease of use; it reflects a deliberate weighting of protocol health. For an audit to be considered “institutional-grade” in 2026, it must account for more than the surface-level multiplier. The AI grading methodology examines how individual terms interact. For example, a “low-multiplier” incentive may look favorable on the surface, but if the AI identifies “Restricted Asset Clauses” that exclude 90% of the platform’s high-liquidity games or markets, the score is heavily penalized. This level of granular analysis was impractical for manual researchers but is now a standard output of high-speed machine learning models.
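One simple way to let terms interact, rather than scoring each in isolation, is to scale the rollover subscore by the share of games or markets that remain eligible. This is a hypothetical illustration, not FreeCryptoBonus’s actual formula; the linear mapping and the 60x `worst_case` cap are assumptions.

```python
def rollover_subscore(multiplier: float, worst_case: float = 60.0) -> float:
    """Map a rollover multiplier to [0, 1]: 0x scores 1.0, worst_case or more scores 0.0.

    The linear shape and the 60x cap are illustrative assumptions.
    """
    return max(0.0, 1.0 - multiplier / worst_case)


def interaction_adjusted(multiplier: float, excluded_share: float) -> float:
    """Scale the rollover subscore by the share of games/markets still eligible."""
    if not 0.0 <= excluded_share <= 1.0:
        raise ValueError("excluded_share must be in [0, 1]")
    return rollover_subscore(multiplier) * (1.0 - excluded_share)


print(f"5x, no restrictions:  {interaction_adjusted(5, 0.0):.3f}")
print(f"5x, 90% excluded:     {interaction_adjusted(5, 0.9):.3f}")
print(f"30x, no restrictions: {interaction_adjusted(30, 0.0):.3f}")
```

With these assumptions a 5x offer that excludes 90% of the catalogue scores about 0.09, well below an unrestricted 30x offer at 0.5.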

Furthermore, these AI audits prioritize the “HODLer’s Advantage,” a critical metric for the DigitalCoinPrice audience. In the past, many platforms imposed a “Currency Conversion” trap, in which Bitcoin deposits were instantly converted into fiat or stablecoins to offload the platform’s price risk. This stripped users of any upside during a market rally. Current AI frameworks explicitly flag “forced conversion” as a high-risk protocol failure. By assigning heavy weight to “Native Asset Retention,” these grading systems aim to ensure that participants can grow their stacks in BTC or ETH without sacrificing their long-term market position. This convergence of data science and financial ethics is what separates a 2026 “Five-Star” platform from the predatory models of the previous decade.
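The cost of a forced conversion is simple arithmetic: if a deposit is held at its USD value and returned at a later BTC price, the user receives fewer coins after a rally. The prices below are hypothetical, and fees and wagering results are ignored.

```python
def btc_returned_after_conversion(deposit_btc: float, price_at_deposit: float,
                                  price_at_withdrawal: float) -> float:
    """BTC the user gets back if the deposit was held at its USD value."""
    usd_value = deposit_btc * price_at_deposit  # value locked in at deposit time
    return usd_value / price_at_withdrawal      # converted back at the new price


deposit_btc = 0.5
native = deposit_btc  # native asset retention: coins are never converted
converted = btc_returned_after_conversion(deposit_btc, 60_000, 90_000)
print(f"native: {native:.3f} BTC, converted: {converted:.3f} BTC")
```

At these prices a 0.5 BTC deposit comes back as about 0.333 BTC. The effect reverses in a falling market; either way, conversion replaces the user’s BTC exposure with USD exposure.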

Provably Fair 2.0: Beyond the Random Number Generator

While “Provably Fair” technology was the first step in blockchain transparency, it only addressed the outcome of a single event. The Transparency Meta of 2026 goes further by auditing the entire lifecycle of a user’s interaction with a platform.

- Automated Term Monitoring: Smart contracts now allow for “Real-Time Terms Auditing,” where any change in a platform’s protocol is instantly flagged by AI aggregators.

- Liquidity Verification: Retail participants are increasingly demanding proof of reserves and liquidity depth before engaging with any high-yield or incentive-heavy environment.

- Algorithmic Accountability: Regulators and third-party auditors are now using the same AI tools as developers to ensure that the “mathematical edge” advertised by a platform aligns with the actual user experience.
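Proof of reserves is commonly built on a Merkle tree of customer balances: the platform publishes the root, and each user checks that their own balance is included using a short proof. The sketch below shows a plain SHA-256 Merkle inclusion check. The leaf encoding and function names are hypothetical, and production schemes typically use Merkle sum trees (so the root also commits to total liabilities) alongside an on-chain proof of the assets themselves.

```python
import hashlib


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def leaf_hash(account_id: str, balance_sats: int) -> bytes:
    # Hypothetical leaf encoding: "account_id:balance" in UTF-8.
    return h(f"{account_id}:{balance_sats}".encode("utf-8"))


def build_levels(leaves: list[bytes]) -> list[list[bytes]]:
    """Build all tree levels bottom-up; an odd last node is paired with itself."""
    levels = [leaves]
    while len(levels[-1]) > 1:
        level = levels[-1]
        if len(level) % 2 == 1:
            level = level + [level[-1]]
        levels.append([h(level[i] + level[i + 1]) for i in range(0, len(level), 2)])
    return levels


def make_proof(levels: list[list[bytes]], index: int) -> list[tuple[bytes, bool]]:
    """Collect (sibling_hash, sibling_is_on_right) pairs from leaf to root."""
    proof = []
    for level in levels[:-1]:
        sibling = index ^ 1
        sibling_hash = level[sibling] if sibling < len(level) else level[index]
        proof.append((sibling_hash, index % 2 == 0))
        index //= 2
    return proof


def verify(leaf: bytes, proof: list[tuple[bytes, bool]], root: bytes) -> bool:
    """Recompute the root from a leaf and its proof, then compare."""
    node = leaf
    for sibling, sibling_on_right in proof:
        node = h(node + sibling) if sibling_on_right else h(sibling + node)
    return node == root


accounts = [("alice", 150_000), ("bob", 2_000_000), ("carol", 42)]
leaves = [leaf_hash(name, sats) for name, sats in accounts]
levels = build_levels(leaves)
root = levels[-1][0]                 # the value the platform would publish
proof = make_proof(levels, 2)        # carol's inclusion proof
print(verify(leaves[2], proof, root))
```

A user who recomputes the root from their own leaf and proof learns that their balance was counted, without seeing anyone else’s balance.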

From a macroeconomic perspective, the “Transparency Meta” is acting as a massive liquidity magnet for the blockchain industry. Institutional capital and high-net-worth individuals, who previously viewed the iGaming and decentralized incentive space as a “Wild West” of unquantifiable risk, are now entering the market.

This trend is also fostering a new era of Regulatory Harmony. Instead of blunt-force bans, forward-thinking jurisdictions are now using these AI grading frameworks to set “Minimum Transparency Standards.” By requiring platforms to publish their terms in a machine-readable format, regulators can automate oversight, ensuring that any protocol that deviates from its “fair play” mathematical model is instantly flagged and de-listed from major aggregators. In this sense, the technology is not just protecting individual users; it is improving the reputation of the entire blockchain sector, moving it away from its “fringe” origins and toward its destiny as the foundational layer of the global digital economy.
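Machine-readable terms make this kind of automated oversight straightforward to sketch. The example below fingerprints a terms document with SHA-256 over canonical JSON, so any silent edit changes the hash, and checks the terms against a minimum standard. The field names and thresholds are hypothetical and are not drawn from any jurisdiction’s actual rules.

```python
import hashlib
import json

# Hypothetical minimum transparency standard; thresholds are illustrative only.
MIN_STANDARD = {
    "max_rollover": 40,
    "min_expiry_days": 7,
    "forced_conversion_allowed": False,
}


def terms_fingerprint(terms: dict) -> str:
    """SHA-256 over canonical JSON (sorted keys, no whitespace) to detect silent edits."""
    canonical = json.dumps(terms, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def violations(terms: dict) -> list[str]:
    """List every way the published terms fall short of the minimum standard."""
    found = []
    if terms["rollover"] > MIN_STANDARD["max_rollover"]:
        found.append("rollover above maximum")
    if terms["expiry_days"] < MIN_STANDARD["min_expiry_days"]:
        found.append("expiry window too short")
    if terms["forced_conversion"] and not MIN_STANDARD["forced_conversion_allowed"]:
        found.append("forced conversion of deposits")
    return found


published = {"rollover": 35, "expiry_days": 30, "forced_conversion": False}
revised = dict(published, rollover=50)  # a later, quieter edit to the terms

if terms_fingerprint(revised) != terms_fingerprint(published):
    print("terms changed:", violations(revised))
```

Canonicalizing before hashing means that reordering keys or reformatting whitespace does not trigger a false alarm, while any change to a value does.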

The Future of Participation

The death of the “Black Box” is a net positive for the entire blockchain ecosystem. When platforms are forced to compete on transparency rather than the size of their (often unattainable) rewards, the quality of the service improves.

As we move deeper into 2026, the success of a digital platform will not be measured by its marketing budget, but by its auditable integrity. In an era where data is the most valuable commodity, the tools that help users verify that data are the true gatekeepers of the new digital economy.